Run GitLab under Docker

My current project involves migrating my corporate site at will between cloud providers, including my own personal dev environment. I could use OpenShift, possibly Cloud9, etc., but I already have so much running fine as a standalone VM. What I really want is to make sure I can provision my web site automatically…and for that I chose Git in conjunction with Puppet!

More specifically – I will use GitLab as a web front-end and to manage team access to the web site source, so I want to run GitLab in my dev environment. But…I want to run GitLab in Docker containers. And my dev environment is based on Mac OS X – and that is a problem! According to numerous posts it can’t be solved without an NFS mount (for example, see https://github.com/docker-library/postgres/issues/60). But I’m here to tell you that yes, you can run GitLab (including the PostgreSQL backend) under Docker on the Mac. And – by extension – easily on any other system.

The problem is that when you run docker-machine on the Mac (or Windows) you are, of course, running a proxy Linux VM that hosts the Docker daemon (in my case, via VirtualBox on my Mac). docker-machine does let you mount host directories into that VM (and /Users gets mounted automatically), but you cannot change ownership or permissions on those mounts from inside the VM. So when you install and run GitLab from Sameer Naik’s excellent work, PostgreSQL tries to change ownership on its mounted volume and fails. It simply doesn’t work under docker-machine.

You can get around the problem by giving the docker-machine VM a larger disk and keeping the data inside it, but then if you dump that VM you lose your Git repositories! Not a good plan.

So my approach? It is to mount additional, persistent disks to the docker-machine VM and then present those disks to the relevant containers.

Step 1 – Create a Persistent Disk

My “persistent disk” is simply a VDI created through VirtualBox in a known folder. Here’s the code:

# create local folder on host which can be shared with docker.

# see https://github.com/docker/machine/issues/1826 for mac osx description

if [ ! -s /srv/docker/sab-data-01.vdi ]; then

echo 'Create VDI...'

sudo mkdir -p /srv/docker

sudo VBoxManage createhd --filename /srv/docker/sab-data-01.vdi --size 65536 --format vdi --variant Standard

sudo chmod 777 /srv/docker/sab-data-01.vdi

fi

I create the VDI with a maximum size of 64 GB (--size is given in MB), but it’s a dynamically allocated disk so the file won’t be any bigger than necessary.
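Since --size is given in megabytes, the 65536 above is simply 64 × 1024. If you want a different size, a tiny helper makes that arithmetic explicit. The name gb_to_mb is my own invention for illustration, not something VirtualBox provides:

```shell
# Hypothetical helper: convert a size in GB to the MB value expected by --size.
gb_to_mb() {
    echo $(( $1 * 1024 ))
}
```

You could then write --size "$(gb_to_mb 64)" instead of hard-coding the number.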

Step 2 – Start / Attach docker-machine

When you run docker-machine, you may well get a brand-new proxy VM created for you. So my next task is to make sure that docker-machine is running and then attach my VDI to it. Here’s some code:

# are we started?

# look up the machine name (assumes docker-machine manages a single machine)

l_docker_machine=$(docker-machine ls --quiet)

if docker-machine status $l_docker_machine 2>/dev/null | grep --quiet -e 'Stopped'; then

echo 'Start Docker machine...'

docker-machine start $l_docker_machine

fi

# are we attached?

l_needs_restart=0

if ! VBoxManage showvminfo $l_docker_machine 2>/dev/null | grep --quiet -e '/srv/docker/sab-data-01.vdi'; then

echo 'Attach to Docker...'

VBoxManage storageattach $l_docker_machine --storagectl "SATA" --device 0 --port 2 --type hdd --medium /srv/docker/sab-data-01.vdi

l_needs_restart=1

fi
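One caveat: docker-machine ls --quiet prints every machine it knows about, one name per line, so the lookup only yields a usable name when there is exactly one machine. Here is a rough sketch of a more defensive lookup. It assumes you want a machine called default when several exist, and pick_machine is a made-up helper name:

```shell
# Hypothetical helper: read machine names on stdin (one per line).
# Print the preferred name ($1, or "default") if it is listed;
# otherwise print the only name if there is exactly one; otherwise fail.
pick_machine() {
    awk -v want="${1:-default}" '
        NF { names[++n] = $1 }
        END {
            for (i = 1; i <= n; i++) {
                if (names[i] == want) { print want; exit 0 }
            }
            if (n == 1) { print names[1]; exit 0 }
            exit 1
        }'
}
```

Usage would look something like l_docker_machine=$(docker-machine ls --quiet | pick_machine) || exit 1, so the script stops instead of passing several names to VBoxManage.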

Step 3 – Initialize the Disk

On first run, we have an uninitialized disk attached to the docker-machine VM. Let’s initialize it using good old ext4 (use anything you like: xfs, btrfs).

# do we need to init the disk?

l_sdb1_init=$(docker-machine ssh $l_docker_machine 'sudo blkid /dev/sdb1' 2>/dev/null | grep UUID)

if [ x"$l_sdb1_init" = x ]; then

echo 'Initialize sdb1 on Docker...'

docker-machine ssh $l_docker_machine '(echo n;echo p;echo 1;echo;echo;echo w; echo q) | sudo fdisk /dev/sdb' 2>/dev/null

docker-machine restart $l_docker_machine

docker-machine ssh $l_docker_machine 'sudo mkfs.ext4 /dev/sdb1' 2>/dev/null

l_needs_restart=1

fi

# capture the UUID; [^"]* is used because BSD sed on the Mac does not support \+

l_sdb1_UUID=$(docker-machine ssh $l_docker_machine 'sudo blkid /dev/sdb1' 2>/dev/null | sed -n -e 's#.*[[:space:]]UUID="\([^"]*\)".*#\1#p')
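Pulling the UUID out of blkid output is easy to get subtly wrong: the field order can vary (a LABEL may come first), and the sed that ships with the Mac lacks some GNU extensions. Here is a minimal sketch that sticks to POSIX sed. The function name extract_uuid is mine, and the sample line in the usage note only illustrates what blkid typically prints:

```shell
# Hypothetical helper: read blkid output on stdin and print the filesystem UUID.
# Matches UUID="..." only when preceded by whitespace, so PARTUUID is ignored.
extract_uuid() {
    sed -n -e 's/.*[[:space:]]UUID="\([^"]*\)".*/\1/p'
}
```

For example, piping a line such as /dev/sdb1: LABEL="data" UUID="…" TYPE="ext4" through extract_uuid prints just the value between the quotes after UUID=, and prints nothing if no UUID is present.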

Once the disk is initialized, I have found I must restart the docker-machine VM:

if [ $l_needs_restart -eq 1 ]; then

echo 'Restart Docker machine...'

docker-machine restart $l_docker_machine

l_needs_restart=0

fi

Next – I must ensure that the disk is mounted. This *appears* to be persistent (remounted) across docker-machine VM restarts, but (of course) must be performed both on first-run as well as any time that the actual underlying docker-machine VM is recreated (which can happen regularly).

# we do need to mount the disk...check

if ! docker-machine ssh $l_docker_machine 'sudo mount' 2>/dev/null | grep --quiet -e '/dev/sdb1'; then

echo 'Mount /dev/sdb1...'

docker-machine ssh $l_docker_machine 'sudo mkdir -p /mnt/sdb1 && sudo mount /dev/sdb1 /mnt/sdb1'

fi
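A plain grep for /dev/sdb1 would also match a device such as /dev/sdb10. If that worries you, a stricter check can compare the first column of the mount output exactly. This is only a sketch: is_mounted is a name I made up, and it assumes the usual Linux "device on mountpoint type fs (options)" layout:

```shell
# Hypothetical helper: read `mount` output on stdin and succeed only if
# device $1 appears as the first field of some line.
is_mounted() {
    awk -v dev="$1" '$1 == dev { found = 1 } END { exit !found }'
}
```

It would be used as docker-machine ssh $l_docker_machine 'sudo mount' 2>/dev/null | is_mounted /dev/sdb1 in place of the grep.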

Finally – let’s initialize the folder structure on the disks. We want space for all the GitLab components: PostgreSQL, Redis, and GitLab itself:

# create our folders on first run only - existing folders (and their data) are left untouched

l_needs_sab_gitlab_folders=$(docker-machine ssh $l_docker_machine '[ ! -d /mnt/sdb1/sab-gitlab ] && echo 1' 2>/dev/null)

if [ x"$l_needs_sab_gitlab_folders" = x1 ]; then

echo 'Create sab-gitlab folders....'

docker-machine ssh $l_docker_machine 'sudo mkdir -p /mnt/sdb1/sab-gitlab/postgresql /mnt/sdb1/sab-gitlab/redis /mnt/sdb1/sab-gitlab/data && sudo chmod -R 777 /mnt/sdb1/sab-gitlab' 2>/dev/null

fi

Step 4 – Create GitLab Containers

The next step is to create / restart our GitLab containers. I use three of them as mentioned above.

First – let’s make sure we can use the docker command (by evaluating the docker-machine environment):

# load docker command

eval "$(docker-machine env $l_docker_machine)"

In the following examples, I specify “volumes” (automatically-mounted host directories) from the VDI I configured on the docker-machine VM.

First – let’s start the PostgreSQL container. Our logic checks to see if we already have the container and – if so – we restart it:

# launch postgresql or restart - important to provide pg_trgm extension

if docker ps -a -f 'name=sab-gitlab-postgresql' 2>/dev/null | grep --quiet -e 'sab-gitlab-postgresql$'; then

echo 'Start postgresql...'

docker start 'sab-gitlab-postgresql'

else

echo 'Run postgresql...'

docker run --name sab-gitlab-postgresql -d \

--env 'DB_NAME=gitlabdb' \

--env 'DB_EXTENSION=unaccent,pg_trgm' \

--env 'DB_USER=gitlab' --env 'DB_PASS=password' \

--volume /mnt/sdb1/sab-gitlab/postgresql:/var/lib/postgresql \

sameersbn/postgresql:latest

fi
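The start-or-run decision can also be factored into a small function, so the same check is not copy-pasted for every container. This is just a sketch: container_action is a hypothetical name, and it assumes your Docker version supports --format on docker ps so that names arrive one per line:

```shell
# Hypothetical helper: read container names on stdin (one per line).
# Print "start" if $1 exactly matches one of them, otherwise "run".
container_action() {
    if grep -q -x -F -- "$1"; then
        echo start
    else
        echo run
    fi
}
```

Something like docker ps -a --format '{{.Names}}' | container_action sab-gitlab-postgresql then tells you which branch to take. Matching the whole line means a name that is merely a prefix of another container's name is not mistaken for it.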

Next – let’s do the same thing for Redis:

# Launch a redis container

if docker ps -a -f 'name=sab-gitlab-redis' 2>/dev/null | grep --quiet -e 'sab-gitlab-redis$'; then

echo 'Start redis...'

docker start 'sab-gitlab-redis'

else

echo 'Run redis...'

docker run --name sab-gitlab-redis -d \

--volume /mnt/sdb1/sab-gitlab/redis:/var/lib/redis \

sameersbn/redis:latest

fi

And, of course, we want to run GitLab. Note that I’m publishing ports 10080 (Web) and 10022 (for SSH access). Also, note that I use the --link option to connect the GitLab container to the Redis and PostgreSQL containers:

# launch gitlab

if docker ps -a -f 'name=sab-gitlab' 2>/dev/null | grep --quiet -e 'sab-gitlab$'; then

echo 'Start gitlab...'

docker start 'sab-gitlab'

else

echo 'Run gitlab...'

docker run --name sab-gitlab -d \

--link sab-gitlab-postgresql:postgresql --link sab-gitlab-redis:redisio \

--publish 10022:22 --publish 10080:80 \

--env 'GITLAB_PORT=10080' --env 'GITLAB_SSH_PORT=10022' \

--env 'GITLAB_SECRETS_DB_KEY_BASE=long-and-random-alpha-numeric-string' \

--volume /mnt/sdb1/sab-gitlab/data:/home/git/data \

sameersbn/gitlab:latest

fi
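The long-and-random-alpha-numeric-string above is a placeholder for a secret you choose yourself. One way to produce such a value with only standard tools is sketched below. The function name random_hex is my own, and hex digits are just one convenient alphanumeric alphabet:

```shell
# Hypothetical helper: print a random lowercase hex string built from
# $1 bytes of /dev/urandom (the result is 2 * $1 characters long).
random_hex() {
    od -An -tx1 -N "$1" /dev/urandom | tr -d ' \t\n'
}
```

Running random_hex 32 yields a 64-character string. I would generate it once and keep it somewhere safe rather than regenerate it on every run, on the assumption that GitLab expects the key to stay the same for the life of the database.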

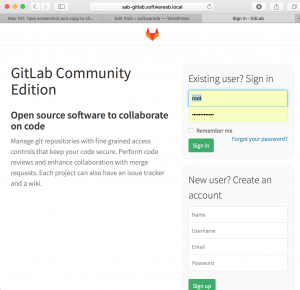

And it all works. I’ve taken the liberty of adding a new /etc/hosts entry for sab-gitlab.softwareab.local.

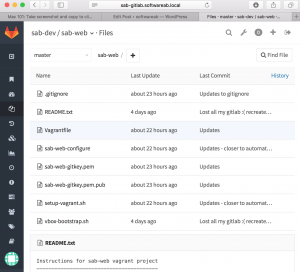

And here’s my web page project:

That is all.
